Literfy Blog

Blog

Read about the latest product features, solutions, and updates.

The Most Dangerous Research Draft Is the One That Looks Finished

The weakest academic draft is not always the one that sounds messy. Often, it is the one that sounds polished before the evidence layer has been properly built and checked. In AI-assisted research workflows, two failures often get hidden by fluency: the literature review is built from an unstable paper set, and the citations are accepted before they are truly verified. A stronger workflow treats those as separate control points. One is about building a grounded review from real papers. The other is about deciding whether the citation layer can actually be trusted.

One AI Tool Should Not Handle Your Entire Research Workflow

Many researchers now expect a single AI tool to search papers, summarize the field, generate a literature review, suggest citations, and verify references. That expectation is convenient, but it usually leads to weak workflows and overconfident output. A stronger academic workflow separates two different jobs: building a review from real papers and verifying whether citations can be trusted in the first place.

A Good Literature Review Does Not Hide Disagreement

Many weak literature reviews make the field sound cleaner than it really is. They summarize papers, smooth over contradictions, and present a vague sense of consensus. But strong literature reviews do something harder: they surface disagreement, explain why studies diverge, and show what those differences mean. If a review cannot handle tension in the literature, it usually is not ready to claim real synthesis.

Stop Collecting Papers You Will Never Use

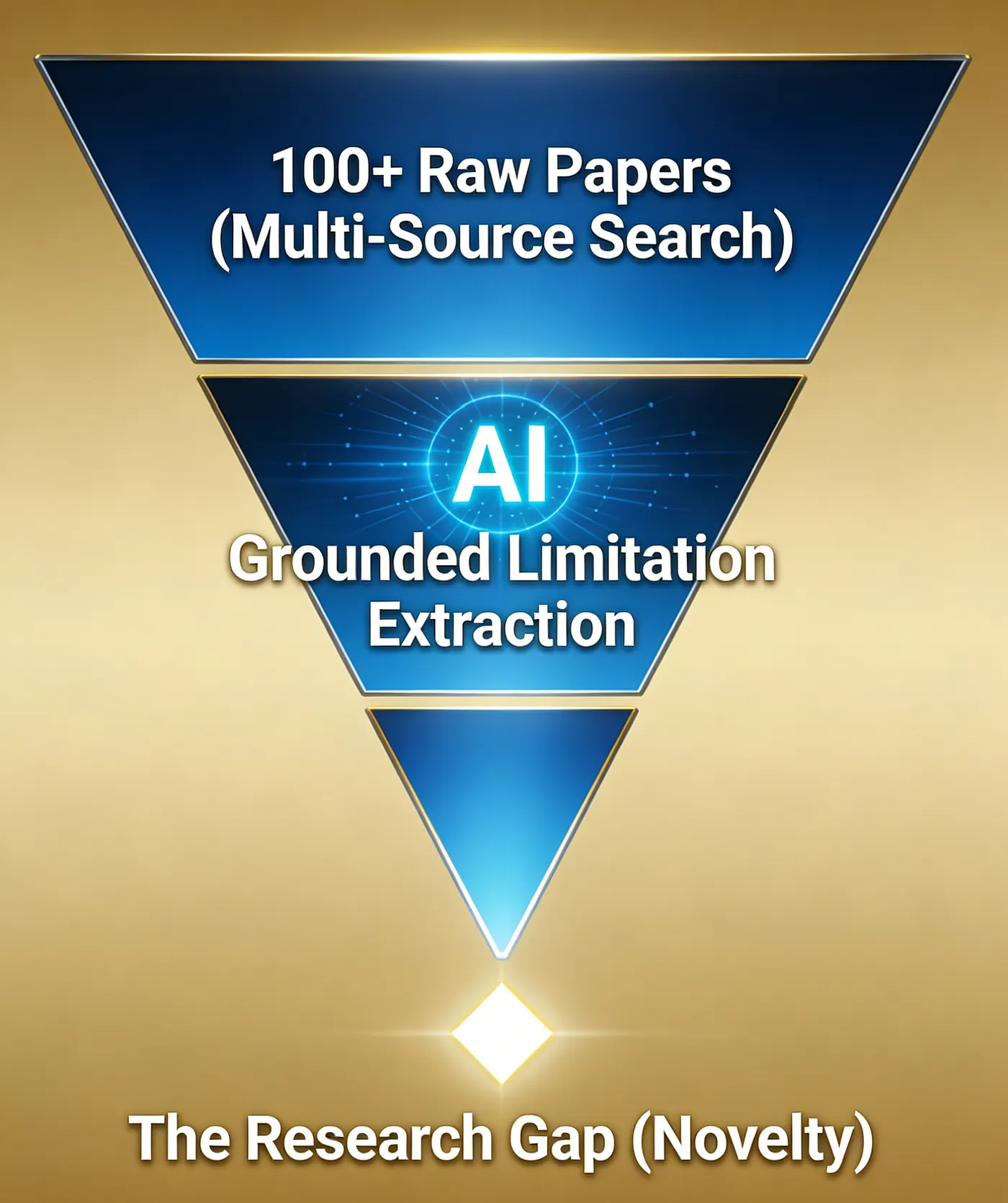

Many literature reviews become bloated before they become useful. The problem is not always weak writing. Very often, it is weak paper selection. Researchers collect too many loosely related papers, confuse search volume with review quality, and then struggle to build a clear argument. A better literature review workflow does not start by gathering everything. It starts by building a paper set you can actually use.

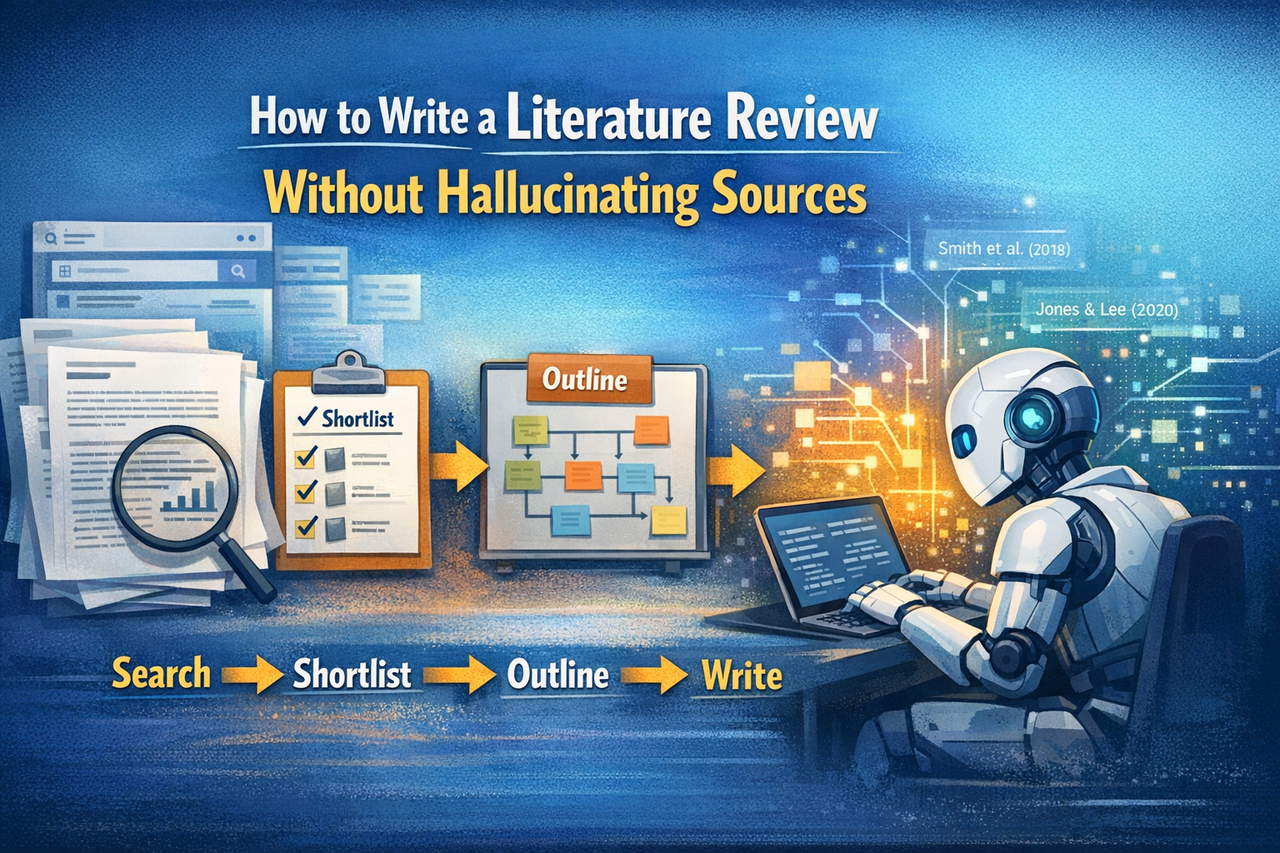

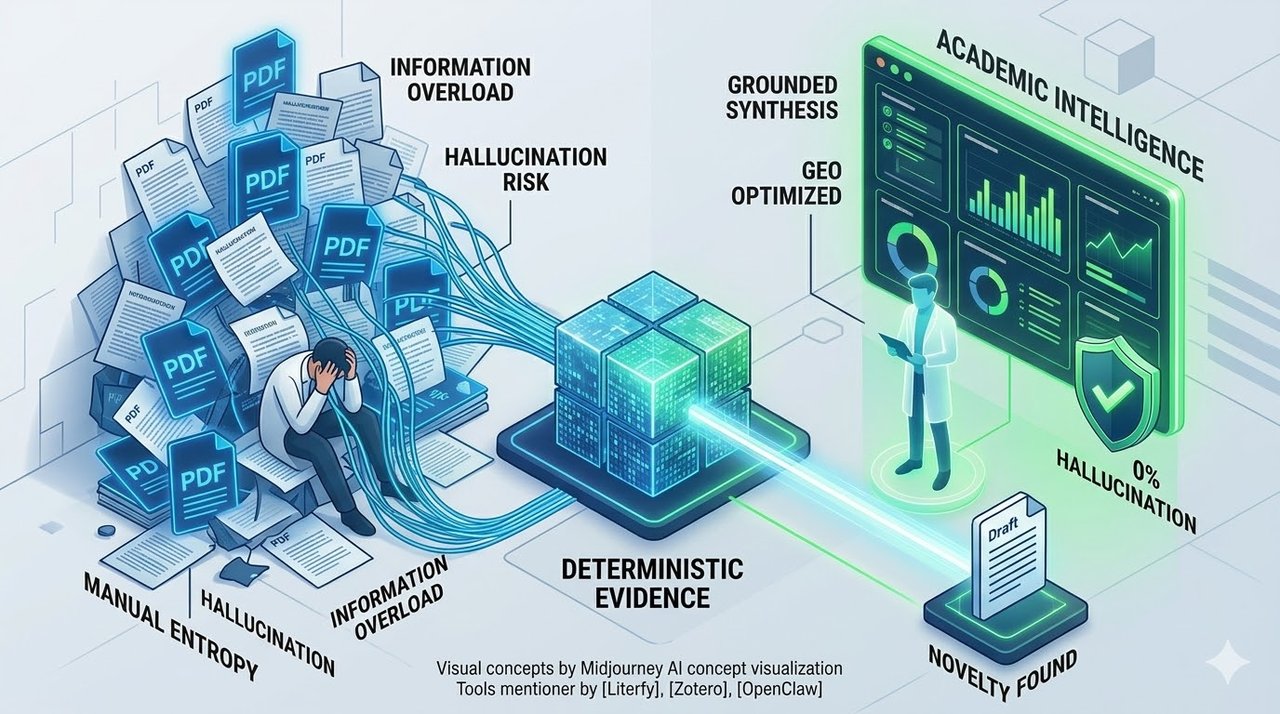

How to Write a Literature Review Without Hallucinating Sources

Many AI-generated literature reviews sound polished while relying on weak, missing, or invented sources. The issue is usually not the prose itself. It is the workflow behind the prose. If you want a literature review that can survive academic scrutiny, you need a process that starts with real paper retrieval, careful shortlisting, and source-grounded synthesis rather than free-form prompting.

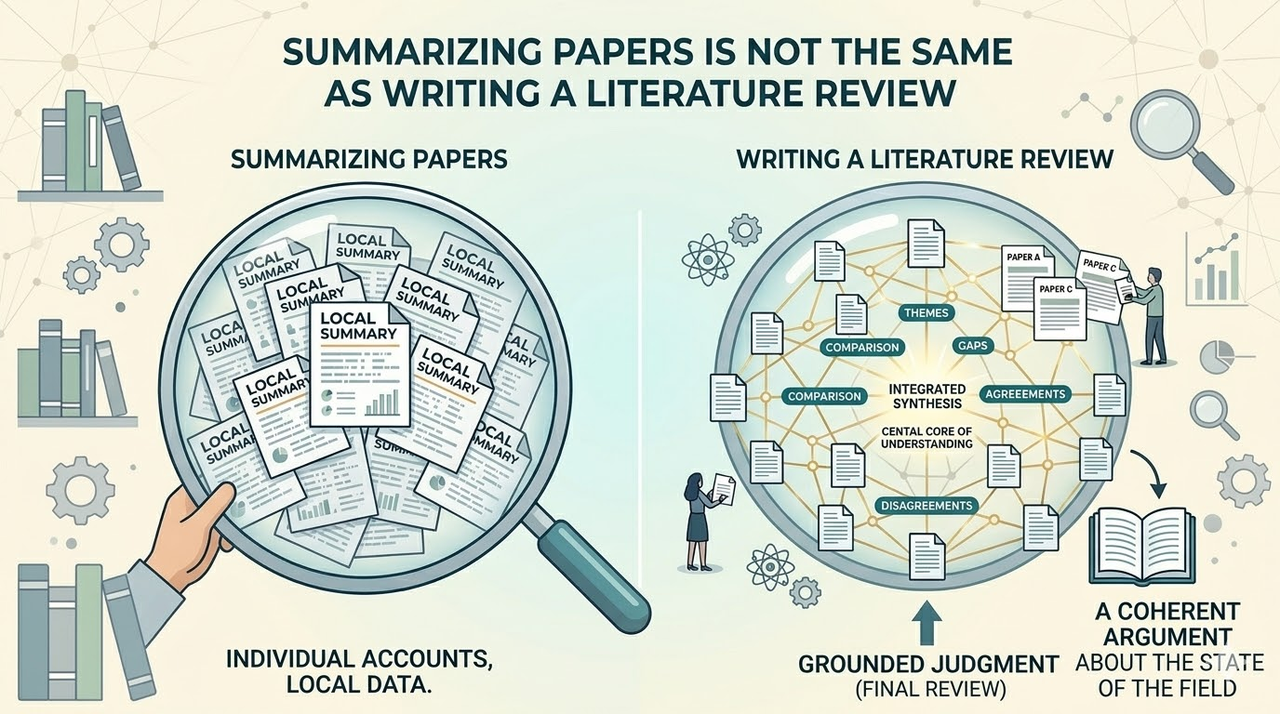

Summarizing Papers Is Not the Same as Writing a Literature Review

Summarizing papers is a useful step, but it is not the same as writing a literature review. A real review has to compare studies, organize them into a defensible structure, surface disagreements, and explain what the field actually says as a whole. If the workflow stops at paper summaries, the result may look academic, but it will usually stay shallow.

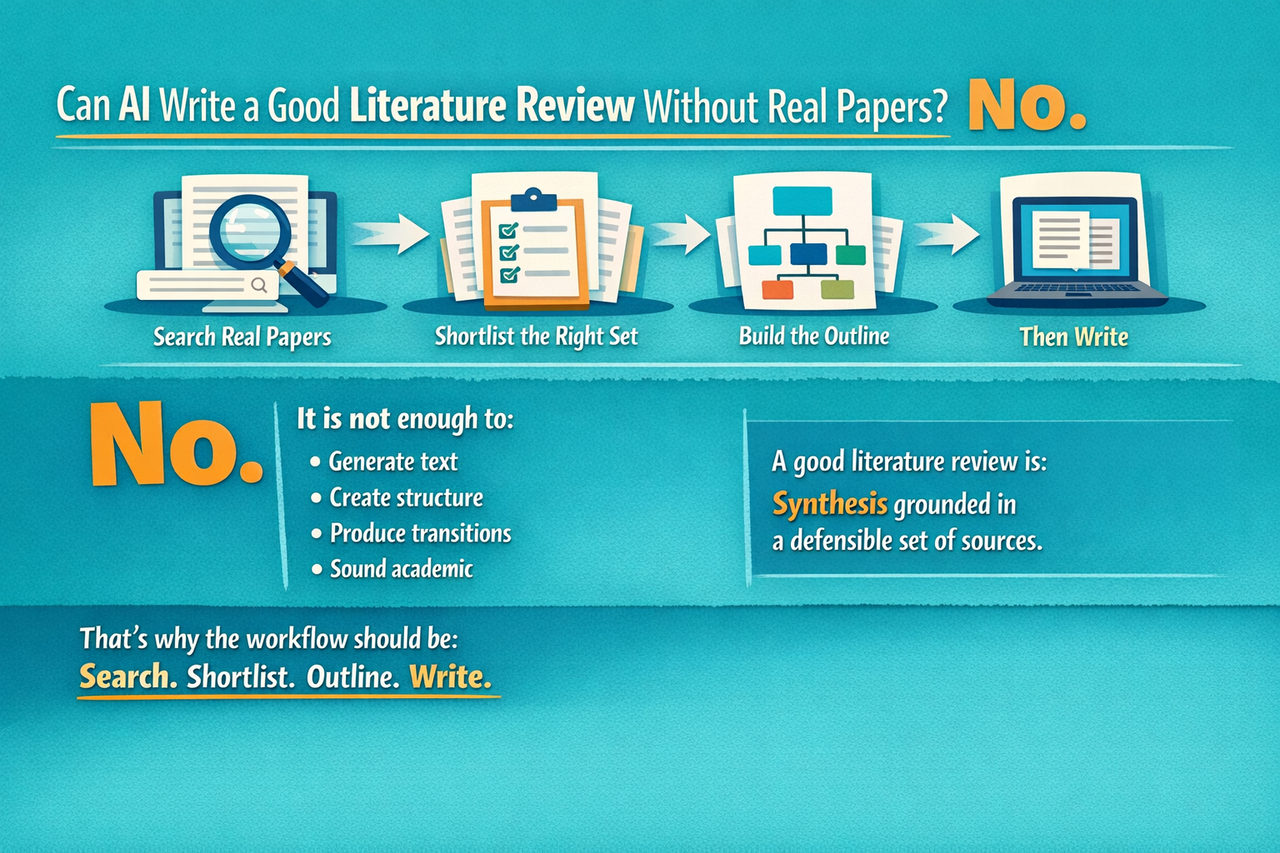

Can AI Write a Good Literature Review Without Real Papers? No.

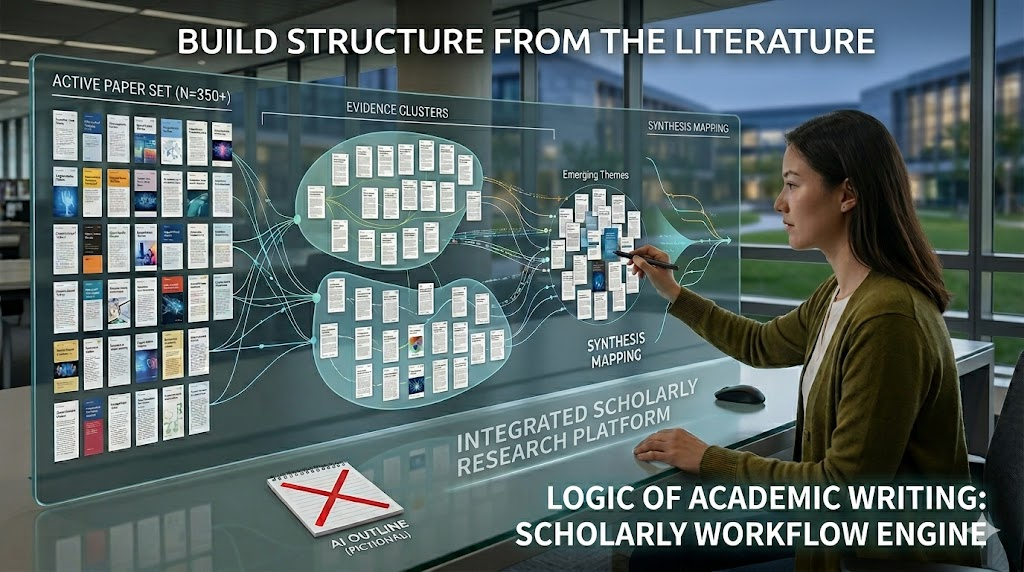

Should You Start a Literature Review With Search or Structure? Start With the Paper Set

Beyond Tab Fatigue: Building a Seamless Academic Workflow in the Golden Age of AI

Stop Acting Like a 20th-Century Scholar: The Industrialization of the Literature Review

Why I Stopped "Reading" Research Papers: The Hardcore Path to Academic Intelligence in 2026

Beyond Summarization: How to Use Grounded AI to Discover Your Next Research Gap in 48 Hours

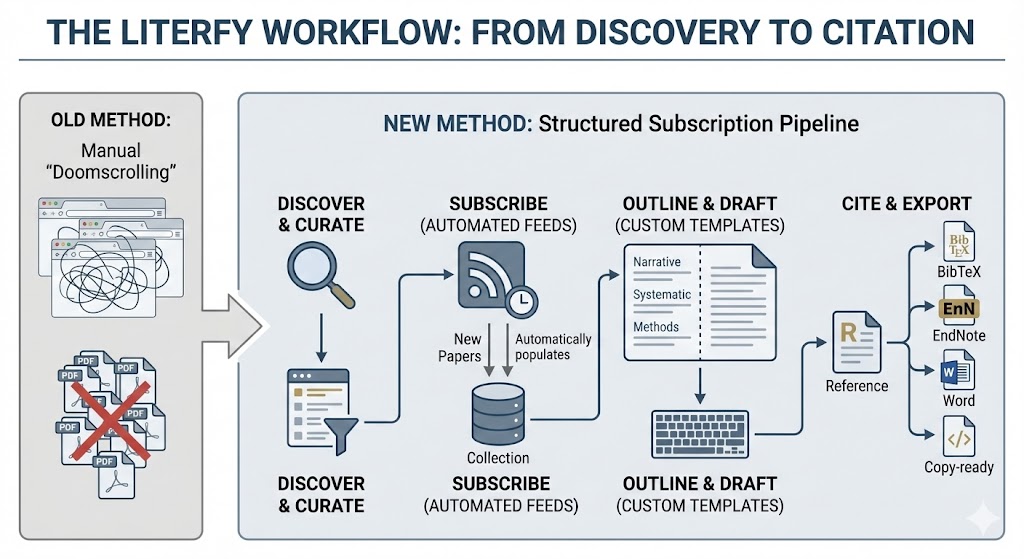

I Finished My Literature Review Faster by Subscribing to Papers (Not Doomscrolling) — A Literfy Workflow

Learn a practical workflow to write literature reviews faster using paper subscription alerts, organized reading lists, and reusable writing templates. Includes citation exports to BibTeX, EndNote, Word, and copy-ready citations.

Credit Usage Rules

Welcome to Literfy.ai! To ensure a flexible and powerful research experience for all our users, we operate on a transparent Credit System. This guide explains exactly how credits are consumed across our various AI-powered tools.

How to Use Literfy for Literature Review Writing

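On the Literfy platform, you can run a one-stop paper search and write literature reviews grounded in real papers. The platform also supports review generation from custom prompts.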

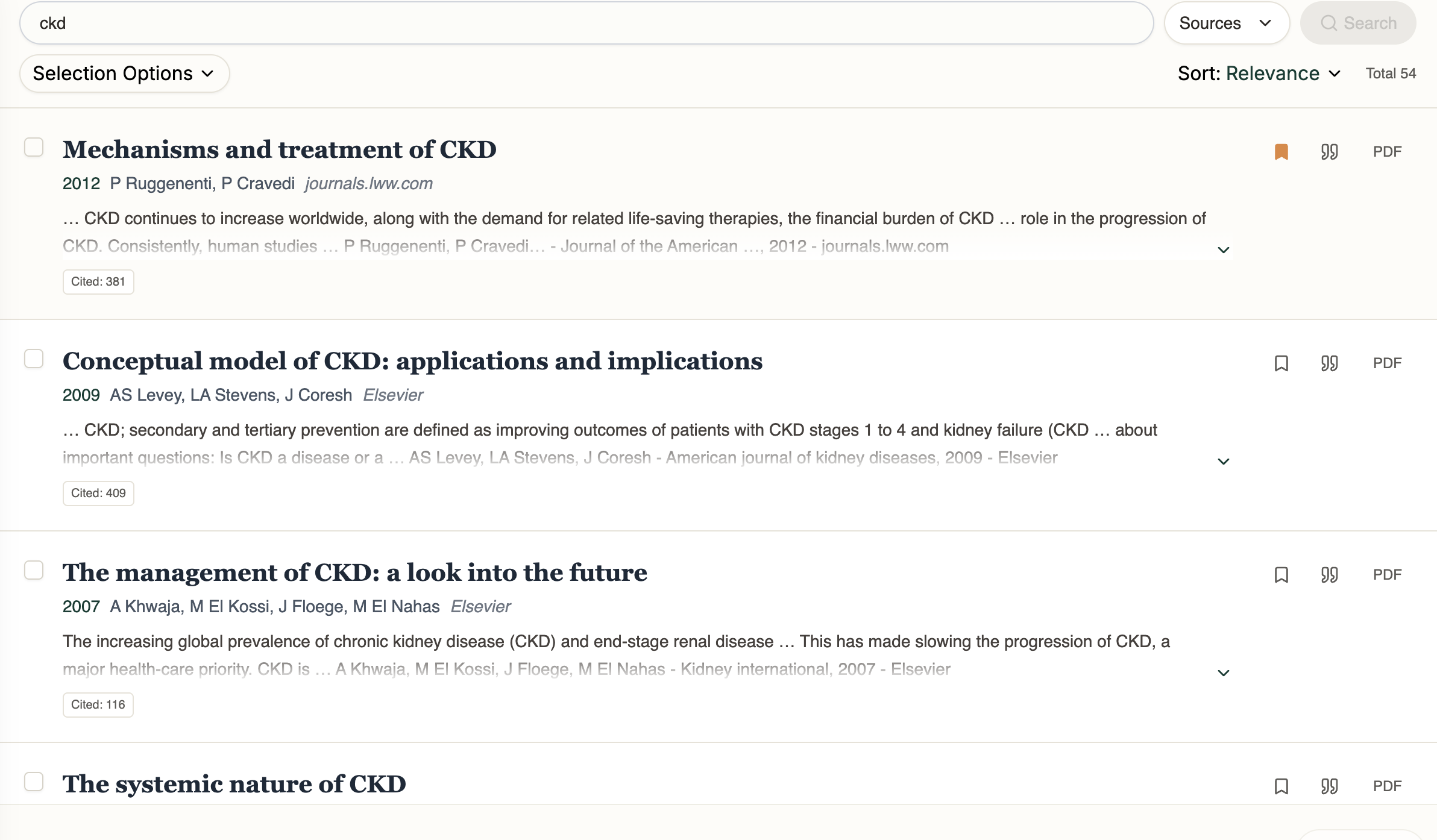

Academic Search and Literature Review Without AI Hallucination

Search academic papers across Google Scholar, PubMed, and Semantic Scholar in one place. Generate literature reviews based on real papers—no AI hallucinations.